Are you struggling with a sluggish laptop that fails to keep up with the computational demands of machine-learning tasks?💁

Perhaps you’re a data scientist, machine learning engineer, or student who is always waiting for code to run or models to train. Your outdated or inadequate hardware could be the bottleneck slowing you down.

The frustration is real. Time is of the essence in the fast-paced field of machine learning, and every second you spend waiting for algorithms to process is a second you could have used to innovate, analyze, and improve your models.

Poor performance isn’t just an inconvenience; it hinders your career or academic progress. Inadequate computing power also brings frequent crashes and errors, which can cost you hours or even days of work.

But don’t worry; there is a light at the end of the tunnel. This comprehensive guide will walk you through the best laptops for machine learning available today. From powerhouse workstations to budget-friendly options, we have recommendations that will unshackle you from the limitations of subpar hardware so you can focus on what truly matters: building revolutionary machine-learning models.

Stay tuned as we delve into each top-tier laptop’s key specifications, performance metrics, and unique features to help you make an informed decision.

Say goodbye to inefficiency and hello to unmatched performance. Welcome to your one-stop resource for finding the “Best Laptop For Machine Learning.”

Key Considerations For Choosing a Laptop for Machine Learning

When choosing a laptop for machine learning, the key considerations go far beyond aesthetics or brand loyalty. This is a domain where the symbiosis of hardware and software can either accelerate your progress or stymie it, perhaps irreparably.

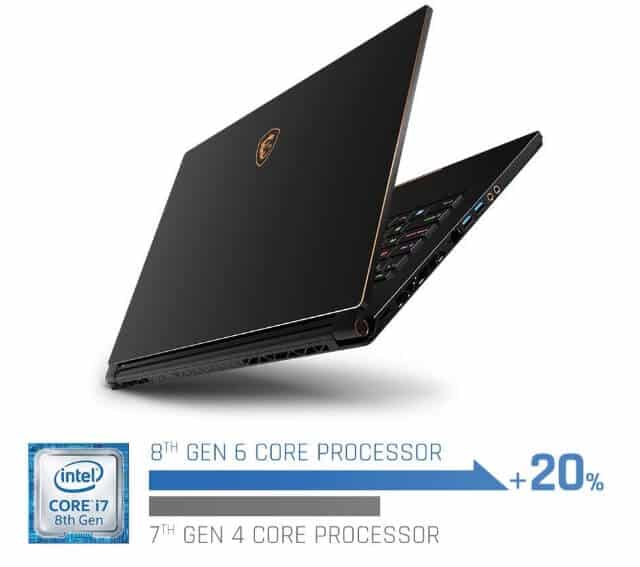

Firstly, ponder the Central Processing Unit (CPU). A high-frequency, multi-core CPU enhances parallel computing, which is vital for algorithmic computations in machine learning.

Don’t fall for buzzwords like “fastest CPU”; delve into benchmarks and real-world tests. Seek a CPU that offers an optimal balance between power consumption and computational prowess.
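One way to act on the advice about real-world tests is to time a small CPU-bound workload on any machine you can try before buying. The following is a minimal sketch using only the Python standard library; `cpu_bound_work` and `best_time` are hypothetical names, and the workload is arbitrary rather than a standard benchmark. It measures single-core speed only, so treat the result as one data point alongside published benchmarks.

```python
import os
import time


def cpu_bound_work(n: int = 200_000) -> int:
    """Arbitrary integer workload; a stand-in for a real preprocessing step."""
    total = 0
    for i in range(n):
        total += (i * i) % 7
    return total


def best_time(fn, repeats: int = 5) -> float:
    """Return the fastest of several runs, in seconds, to reduce timing noise."""
    timings = []
    for _ in range(repeats):
        start = time.perf_counter()
        fn()
        timings.append(time.perf_counter() - start)
    return min(timings)


if __name__ == "__main__":
    print(f"Logical CPU cores: {os.cpu_count()}")
    print(f"Best single-core time: {best_time(cpu_bound_work):.4f} s")
```

Running the same script on two candidate laptops gives a rough like-for-like comparison, provided both are plugged in and otherwise idle.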

Secondly, Random Access Memory (RAM) warrants attention. A lack of RAM can bottleneck performance, causing irksome data preprocessing or model training lags.

Experts often extol the virtues of 32GB RAM or more for serious machine learning tasks. Yet, for light computations, 16GB could suffice. The keyword here is “scalability”—opt for a laptop with expandable RAM.
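To judge whether 16GB or 32GB is enough for your own work, a back-of-the-envelope estimate of dataset size helps. The sketch below assumes a dense numeric table held fully in memory, with 4 bytes per value (32-bit floats), and assumes that only about half of the installed RAM is realistically available for the data itself. Both assumptions, and the function names, are illustrative rather than rules; it also treats the advertised GB figure as GiB for simplicity.

```python
def dataset_size_gib(rows: int, columns: int, bytes_per_value: int = 4) -> float:
    """Approximate in-memory size of a dense numeric table, in GiB."""
    return rows * columns * bytes_per_value / 2**30


def fits_in_ram(size_gib: float, ram_gib: float, headroom: float = 0.5) -> bool:
    """True if the dataset fits in the assumed usable share of RAM."""
    return size_gib <= ram_gib * headroom


if __name__ == "__main__":
    # Hypothetical dataset: 40 million rows, 100 float32 features.
    size = dataset_size_gib(40_000_000, 100)
    print(f"Dataset size: {size:.1f} GiB")
    print(f"Fits with 16GB RAM: {fits_in_ram(size, 16)}")
    print(f"Fits with 32GB RAM: {fits_in_ram(size, 32)}")
```

For this hypothetical dataset the estimate is roughly 14.9 GiB, which is too large for the assumed usable share of 16GB but fits within 32GB.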

The Graphics Processing Unit (GPU) is another linchpin. For data scientists dabbling in deep learning, a high-caliber GPU is non-negotiable. It’s not just about raw performance; consider compatibility with machine learning frameworks like TensorFlow or PyTorch. Moreover, investigate the laptop’s thermal design—inefficient heat dissipation can throttle your GPU’s performance.
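Framework compatibility can be checked directly on a machine that already has PyTorch installed. The sketch below is one possible check, not an official recipe: `pick_device` is a hypothetical helper that reports whether PyTorch can see an NVIDIA GPU through CUDA, an Apple-silicon GPU through the MPS backend, or neither. If PyTorch is not installed, it simply falls back to the CPU.

```python
def pick_device() -> str:
    """Return 'cuda', 'mps', or 'cpu' depending on what PyTorch can use."""
    try:
        import torch
    except ImportError:
        return "cpu"  # PyTorch is not installed on this machine

    if torch.cuda.is_available():
        return "cuda"  # NVIDIA GPU with a working CUDA setup

    # The MPS backend only exists in newer PyTorch builds, so look it up safely.
    mps = getattr(torch.backends, "mps", None)
    if mps is not None and mps.is_available():
        return "mps"  # Apple-silicon GPU

    return "cpu"


if __name__ == "__main__":
    print(f"PyTorch would run on: {pick_device()}")
```

A result of `cpu` on a laptop advertised with a dedicated GPU usually points to a driver or installation problem rather than a hardware limit.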

Storage should be your next focus. Solid State Drives (SSDs) are de rigueur, offering blazing-fast read-write speeds essential for voluminous data sets. With an SSD, data retrieval and model training become significantly expedited.
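Read speed can be sanity-checked with a short script. The following sketch writes a temporary file and times how long it takes to read it back; `read_throughput_mb_s` is a hypothetical name and the file size is an arbitrary choice. Because the operating system may serve the read from its cache instead of the drive, the figure is an optimistic estimate and not a substitute for a dedicated disk benchmark.

```python
import os
import tempfile
import time

MB = 1024 * 1024


def read_throughput_mb_s(size_mb: int = 64, chunk_mb: int = 4) -> float:
    """Write a temporary file, read it back, and return the read speed in MB/s."""
    chunk = os.urandom(chunk_mb * MB)
    chunks = max(1, size_mb // chunk_mb)
    with tempfile.NamedTemporaryFile(delete=False) as tmp:
        path = tmp.name
        for _ in range(chunks):
            tmp.write(chunk)
        tmp.flush()
        os.fsync(tmp.fileno())  # push the data to the drive before timing
    try:
        start = time.perf_counter()
        with open(path, "rb") as handle:
            while handle.read(chunk_mb * MB):
                pass
        elapsed = time.perf_counter() - start
    finally:
        os.remove(path)  # always clean up the temporary file
    return (chunks * chunk_mb) / max(elapsed, 1e-9)


if __name__ == "__main__":
    print(f"Approximate read speed: {read_throughput_mb_s():.0f} MB/s")
```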

Lastly, consider auxiliary features: an assortment of ports for peripheral integration, a high-resolution display for meticulous data visualization, and robust build quality to withstand inevitable wear and tear.

In summary, choosing a laptop for machine learning hinges not on one factor but on a confluence of them, ranging from indispensable hardware specifications to the nuances of upgradeability and thermal performance.

Prioritize according to your specific use cases and long-term ambitions in the machine learning landscape.

Machine Learning Laptop Requirements

A few essential criteria should be taken into account while selecting a laptop for machine learning:-

| Component | Recommended Specifications |
|---|---|
| Processor | Intel Core i7 or higher (8th generation or newer) or AMD Ryzen 7 or higher |
| RAM | 16 GB or higher |
| Graphics Card | NVIDIA GeForce GTX 1660 Ti or higher (with CUDA support) |
| Storage | Solid-state drive (SSD) with at least 512 GB of storage space |
| Display | 15.6-inch Full HD (1920×1080) or higher resolution |
| Operating System | Windows 10 or macOS 10.14 or higher |
| Battery Life | Minimum 6 hours of battery life |
| Connectivity | Wi-Fi 5 (802.11ac) or Wi-Fi 6 (802.11ax) for fast wireless internet connection |
| Ports | USB 3.0 or higher (at least 2-3 ports), HDMI, Ethernet, and an SD card reader |
| Weight | Ideally under 5 pounds (for portability) |

With these specifications in mind, we compiled a list of the best laptops for machine learning, each able to manage the demands of machine learning tasks.
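The numeric minimums in the requirements table can be turned into a quick checklist for any laptop you are considering. The sketch below encodes only a subset of the table (RAM, storage, battery life, weight, and CUDA support); `LaptopSpec`, `unmet_requirements`, and the example machine are all hypothetical and do not describe any product in this guide.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class LaptopSpec:
    ram_gb: int
    storage_gb: int
    battery_hours: float
    weight_lb: float
    has_cuda_gpu: bool


def unmet_requirements(spec: LaptopSpec) -> List[str]:
    """Return the table's requirements that this laptop does not meet."""
    unmet = []
    if spec.ram_gb < 16:            # RAM: 16 GB or higher
        unmet.append("RAM")
    if spec.storage_gb < 512:       # Storage: at least 512 GB SSD
        unmet.append("storage")
    if not spec.has_cuda_gpu:       # Graphics card: CUDA support
        unmet.append("CUDA GPU")
    if spec.battery_hours < 6:      # Battery life: minimum 6 hours
        unmet.append("battery life")
    if spec.weight_lb >= 5:         # Weight: ideally under 5 pounds
        unmet.append("weight")
    return unmet


if __name__ == "__main__":
    # Hypothetical heavy workstation with a short battery life.
    candidate = LaptopSpec(ram_gb=32, storage_gb=1024, battery_hours=4.0,
                           weight_lb=7.2, has_cuda_gpu=True)
    print(unmet_requirements(candidate))  # ['battery life', 'weight']
```

An empty list means the laptop clears every encoded minimum; a short list like the one above shows the trade-offs you would be accepting.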

Let’s explore.

Best Laptop For Machine Learning – Our Picks

1. MSI Titan GT77HX 13VI-042US – Best Overall

In the rapidly evolving landscape of machine learning, the MSI Titan GT77HX 13VI-042US stands as a formidable contender, poised to redefine the horizons of computational capabilities.

With its intricate fusion of cutting-edge hardware and innovative features, this laptop emerges not just as an option but as the best laptop for machine learning specialists.

At the heart of this technological marvel lies the powerhouse Intel Core i9-13980HX processor, a true testament to processing prowess. Supported by the groundbreaking RTX 4090 graphics card, this laptop doesn’t just compute; it orchestrates complex data manipulations and neural network training with unparalleled finesse.

128GB DDR5 memory, a reservoir of computational potential, ensures that data-intensive tasks involving massive datasets remain smooth and efficient. Data preprocessing, model optimization, and evaluation become streamlined undertakings, accentuating the laptop’s reliability.

Diving into the storage realm, the 4TB NVMe SSD isn’t just storage; it’s a vault for housing complex datasets, model weights, and experiment logs, ensuring that research endeavors are not stifled by limited space.

Connectivity is elevated to an art with Thunderbolt 4 and USB-Type C ports. Swift data transfer, seamless integration with peripherals, and connection to external monitors redefine collaborative possibilities.

Precision-engineered, the Cooler Boost Titan system maintains optimal thermal equilibrium, circumventing heat-induced performance degradation during prolonged model training sessions, a critical aspect for machine learning professionals seeking uncompromised results.

The operating system, Windows 11 Pro, harmonizes flawlessly with the hardware, a symphony of user-friendly interface and raw computational power. Encased in a sleek Core Black chassis, this laptop doesn’t just perform; it represents elegance and sophistication.

In the tapestry of machine learning laptops, the MSI Titan GT77HX 13VI-042US threads a narrative of computational supremacy. It bridges the chasm between cutting-edge hardware and the intricate demands of machine learning, ushering in a new era of possibilities for researchers, practitioners, and enthusiasts alike.

Pros:-

Unparalleled Processing Power: The MSI Titan GT77HX 13VI-042US is a powerhouse equipped with an Intel Core i9-13980HX processor, delivering exceptional computing muscle crucial for intricate machine learning algorithms.

Cutting-edge Graphics: The RTX 4090 graphics card accelerates both model training and data visualization, enabling professionals to interpret and refine models efficiently.

Vast Memory Capacity: With 128GB DDR5 memory, this laptop handles complex multitasking and data manipulation, an invaluable asset for resource-intensive machine learning tasks.

Extensive Storage: The 4TB NVMe SSD provides ample space for storing large datasets and model checkpoints, eliminating storage constraints in ambitious research projects.

Advanced Connectivity: Thunderbolt 4 and USB-Type C ports grant lightning-fast data transfer and connection to external devices, simplifying collaboration and data exchange.

Efficient Thermal Management: The Cooler Boost Titan system ensures optimal temperature control during prolonged usage, safeguarding against overheating and maintaining consistent performance.

Modern OS: Windows 11 Pro harmonizes seamlessly with the hardware, offering an intuitive user experience and enhancing productivity.

Stunning Display: The 17.3″ UHD 144Hz Mini LED display facilitates detailed data analysis and model visualization, a visual feast for machine learning practitioners.

Cons:-

Weight and Portability: The laptop’s robust hardware makes it relatively heavy, potentially limiting its portability for those frequently on the move.

High Price Tag: The impressive specs come at a premium, making the laptop a significant investment that might not be accessible to everyone.

Battery Life: The power-hungry components could have limited battery life, requiring access to power outlets during extended usage.

Bulky Form Factor: The laptop’s size might hinder users seeking a more compact and lightweight solution.

Fan Noise: Intensive tasks might lead to noticeable fan noise, which could be distracting in quieter environments.

Learning Curve: Users unfamiliar with advanced hardware configurations might need time to optimize the laptop’s settings for optimal performance.

In machine learning, the MSI Titan GT77HX 13VI-042US undoubtedly asserts its dominance with its exceptional strengths. While weight, price, and battery life are worth noting, the laptop’s remarkable processing power, memory capacity, and connectivity options make it a frontrunner in pursuing groundbreaking machine-learning endeavors.

2. Razer Blade 16

In AI programming, the Razer Blade 16 emerges as an exceptional tool, seamlessly fusing cutting-edge hardware and innovative features to redefine the landscape.

With its 13th Gen Intel 24-Core i9 HX CPU and NVIDIA GeForce RTX 4090, this laptop transcends expectations, positioning itself as the best laptop for AI programming enthusiasts and professionals.

At its computational core lies the powerhouse 24-core Intel i9 HX CPU, a true marvel of processing prowess. This CPU’s hyper-threading capabilities elevate multitasking, while its clock speeds handle complex AI model training and optimization with remarkable finesse. Supported by the NVIDIA GeForce RTX 4090, the laptop provides a graphical canvas for AI visualization that’s unparalleled.

The Razer Blade 16’s 32GB RAM ensures fluid multitasking, allowing AI programmers to simultaneously run resource-intensive neural networks and data preprocessing pipelines without a hint of lag. This memory is a playground for experimenting with large datasets, ultimately expediting model development.

A 2TB SSD accelerates data retrieval and offers an expansive storage solution for AI datasets, codebases, and pre-trained model weights. This ensures that AI projects aren’t constrained by storage limitations, fostering unbridled innovation.

Dual Mode Mini LED display technology, featuring 4K UHD+ 120Hz and FHD+ 240Hz options, presents a visual haven for AI programmers. Data visualization, model monitoring, and debugging become more intuitive and engaging, enhancing the development journey.

The Razer Blade 16’s impeccable thermal design keeps temperatures in check during prolonged AI programming sessions. This thermal equilibrium prevents overheating, ensuring consistent performance throughout the intricacies of neural network fine-tuning.

In summary, the Razer Blade 16 ingeniously harmonizes processing might, graphical excellence, and ergonomic design to stand as a pinnacle for AI programming.

Its integration of bleeding-edge hardware and thoughtful features orchestrates a symphony that resonates with AI programmers, enabling them to push the boundaries of what’s achievable in artificial intelligence.

Pros:-

Powerful Processing: The Razer Blade 16 boasts a 13th Gen Intel 24-Core i9 HX CPU, delivering immense processing power essential for AI programming tasks involving complex calculations and model training.

Cutting-edge Graphics: With the NVIDIA GeForce RTX 4090, the laptop offers superior graphical capabilities, enhancing AI visualization, rendering, and analysis.

Ample Memory: The laptop’s 32GB RAM ensures smooth multitasking, enabling programmers to run multiple resource-intensive AI processes simultaneously.

Spacious Storage: The 2TB SSD provides generous storage space for extensive AI datasets, code repositories, and pre-trained model weights, promoting uninterrupted experimentation.

Dual Display Options: The 4K UHD+ 120Hz and FHD+ 240Hz dual display options cater to diverse AI programming needs, from data visualization to code debugging, offering optimal visual clarity.

Thermal Efficiency: The laptop’s thoughtful thermal design maintains optimal temperatures during extended AI programming sessions, preventing overheating and ensuring consistent performance.

Cons:-

Price: The advanced hardware components and features of the Razer Blade 16 come at a premium, potentially making it less accessible for budget-conscious AI programmers.

Battery Life: The powerful CPU and graphics card might impact battery life, necessitating access to power outlets for prolonged usage.

Portability: While not excessively heavy, the laptop’s robust hardware might make it slightly less portable compared to more lightweight options.

Learning Curve: Users unfamiliar with high-performance hardware configurations might require time to fully optimize the laptop’s settings for optimal AI programming performance.

Fan Noise: Under heavy workloads, the cooling fans might generate noticeable noise, which could be distracting in quieter environments.

Display Size: While the laptop’s 16-inch display provides ample screen real estate, some users might prefer larger displays for in-depth AI model analysis.

The Razer Blade 16 shines as a commendable tool in AI programming, offering remarkable strengths that cater to the intricate demands of AI model development and optimization.

While price and battery life warrant consideration, the laptop’s exceptional processing power, graphics capabilities, memory capacity, and display options make it a standout choice for AI programmers aiming to craft innovative solutions in the digital realm.

3. Apple MacBook Pro

In the rapidly evolving landscape of machine learning, having the right tools at your disposal can make all the difference. Enter the Apple MacBook Pro, a testament to technological prowess that is a paragon of excellence.

The Early 2023 MacBook Pro 16.2″ model, adorned in sleek Silver, emerges as an unrivaled companion for those treading the intricate paths of machine learning endeavors.

The heartbeat of this powerhouse is the revolutionary M2 Max Chip. Boasting a 12-core CPU that orchestrates computational symphonies and a staggering 38-core GPU that paints intricate parallelism, this chip defies convention. It’s not just a processor; it’s an enabler of computational artistry.

The Liquid Retina XDR Display, spanning 16.2 inches, is a canvas of unparalleled visual splendor. It breathes life into complex data visualizations, unraveling patterns and insights hidden in the depths of data oceans. This display is not merely a window; it’s an aperture to clarity.

Memory is the cornerstone of efficient machine learning, and the 96GB Memory in this MacBook Pro is a sanctuary for datasets and model architectures. With this expanse of memory, multitasking becomes a ballet of efficiency, allowing you to orchestrate your machine-learning symphony without missing a note.

In the realm of data, storage is sovereignty, and the 2TB SSD in this MacBook Pro provides a kingdom of storage for your endeavors. It’s not just storage; it’s a treasury of datasets, model checkpoints, and experiment logs – a realm where ideas incubate.

But the brilliance of the MacBook Pro extends beyond its components. It’s a complete ecosystem that seamlessly integrates with other Apple devices, turning your machine-learning endeavors into a harmonious symphony. The synergy between MacBook Pro and iPhone/iPad is a melody of efficiency, allowing you to transition between devices without missing a beat.

In a world where microseconds can define success, the M2 Max Chip’s computational finesse, combined with the colossal memory and storage, pave the way for a revolution in machine learning. In all its glory, the Apple MacBook Pro is the pinnacle of innovation, a companion to data scientists, AI engineers, and machine learning visionaries.

In the tapestry of machine learning tools, the Apple MacBook Pro is the golden thread that weaves innovation, power, and elegance. It’s not just a laptop; it’s a conduit for turning data into decisions, algorithms into insights, and ideas into impact. It is the catalyst that transforms machine learning from a challenge into an art form.

In machine learning, the choice of hardware can profoundly impact productivity and outcomes. The Apple MacBook Pro has garnered attention as a potential powerhouse for machine learning tasks, but like any tool, it comes with its strengths and limitations.

Here’s a breakdown of the pros and cons:

Pros:-

Raw Computational Power: The latest M2 Max Chip, with its 12-Core CPU and staggering 38-Core GPU, provides immense computational prowess. This power accelerates complex calculations and model training, reducing processing time significantly.

Ample Memory: With 96GB Memory, the MacBook Pro can handle large datasets and memory-intensive operations efficiently. This is crucial for training intricate models and running resource-demanding algorithms.

High-Resolution Display: The 16.2-inch Liquid Retina XDR Display is a visual marvel. Its high resolution and color accuracy allow machine learning practitioners to visualize data and results with exceptional clarity, aiding in pattern recognition and analysis.

Sleek Portability: Despite its potent hardware, the MacBook Pro retains its sleek and portable form factor, making it convenient for on-the-go work, collaborations, and presentations.

Optimized Ecosystem: The MacBook Pro seamlessly integrates within the Apple ecosystem, offering effortless data and code sharing between devices. The continuity between MacBook Pro, iPhone, and iPad streamlines workflows and enhances productivity.

Cons:-

Cost: The sheer power of the MacBook Pro comes at a premium. The top-tier hardware configuration, including the M2 Max Chip, substantial memory, and generous storage, can be expensive, potentially posing a budget constraint for individual users or small teams.

Thermal Management: While the MacBook Pro’s thermal architecture is commendable, prolonged heavy-duty tasks might lead to thermal throttling – a reduction in performance to prevent overheating. This can affect sustained high-performance tasks like long model training sessions.

Limited Customization: Unlike desktop counterparts, the MacBook Pro’s hardware configurations are often not user-upgradable. Choosing the right specifications upfront is crucial, as upgrading components later may not be feasible.

Graphics Limitations: While the GPU is impressive, it’s not on par with high-end desktop GPUs. This might impact performance in tasks heavily reliant on GPU computing, such as training certain deep learning models.

Software Compatibility: While macOS has gained ground in machine learning software availability, some specialized tools and libraries might have better support on other platforms like Linux. Ensuring software compatibility is vital before committing to the MacBook Pro.
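The thermal throttling noted in the cons above can be spotted by running the same workload repeatedly and watching whether later runs get slower. The sketch below is one simple way to do that, assuming a workload you supply yourself; `time_repeated` and `slowdown_ratio` are hypothetical helpers, and the sample timings are made up for illustration. A ratio well above 1.0 suggests slowdown over time, although background processes can produce the same effect.

```python
import time
from statistics import median
from typing import Callable, List


def time_repeated(workload: Callable[[], object], runs: int) -> List[float]:
    """Run the same workload several times and record each duration in seconds."""
    timings = []
    for _ in range(runs):
        start = time.perf_counter()
        workload()
        timings.append(time.perf_counter() - start)
    return timings


def slowdown_ratio(timings: List[float]) -> float:
    """Compare the last quarter of runs with the first quarter of runs."""
    if len(timings) < 4:
        raise ValueError("need at least 4 timings")
    k = len(timings) // 4
    return median(timings[-k:]) / median(timings[:k])


if __name__ == "__main__":
    # Made-up timings: runs start at 1.0 s and drift up to 1.5 s.
    sample = [1.0, 1.0, 1.1, 1.0, 1.3, 1.4, 1.5, 1.5]
    print(f"Slowdown ratio: {slowdown_ratio(sample):.2f}")  # 1.50
```

In practice, `time_repeated` would be given a training step or similar heavy task and run for long enough that the laptop reaches its steady temperature.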

In conclusion, the Apple MacBook Pro is a compelling choice for machine learning tasks due to its raw power, memory capacity, and ecosystem integration. However, potential buyers should carefully weigh the pros and cons against their specific needs and budget.

It’s a premium solution that can accelerate workflows and enhance productivity, but understanding its limitations is equally important in making an informed decision.

4. ASUS ROG Strix G16 – Best For Students

In artificial intelligence, the ASUS ROG Strix G16 emerges as a beacon of technological prowess, weaving together advanced components and features to establish itself as the best laptop for artificial intelligence students.

With a 16:10 16-inch FHD 165Hz display, GeForce RTX 4070, and Intel Core i9-13980HX processor, this laptop caters to the intricate demands of burgeoning AI enthusiasts.

The 16:10 aspect ratio of the 16-inch FHD display presents an expansive canvas for AI students to dissect complex data visualizations and code hierarchies precisely. The elevated 165Hz refresh rate ensures fluidity during dynamic AI simulations, enhancing the immersive experience.

Empowered by the GeForce RTX 4070, the laptop embarks on a journey of AI visualization that is second to none. Its graphical prowess lends itself to accelerating training workflows, enabling swift model convergence and testing of innovative algorithms.

Beneath the chassis, the Intel Core i9-13980HX processor unfurls its computational wings, enabling AI students to engage in heavy-duty model training, hyperparameter tuning, and data preprocessing with finesse. The 16GB DDR5 memory augments these capabilities, facilitating smooth multitasking and complex AI experimentation.

The 1TB PCIe SSD is a repository for AI datasets, project codes, and model weights. This storage prowess guarantees that students are unhindered by storage constraints, enabling them to unravel the depths of their AI aspirations without compromise.

Seamless connectivity is established through Wi-Fi 6E, which ensures low-latency communication between the laptop and cloud-based AI resources. This synergy is pivotal for data ingestion, model deployment, and collaborative projects.

Wrapped in the sophistication of Windows 11, the laptop’s user interface aligns seamlessly with the student’s workflow, promoting a harmonious AI programming journey. The Eclipse Gray exterior resonates with the laptop’s essence — a blend of power and elegance.

In summation, the ASUS ROG Strix G16 takes the helm as a flagship for AI students. Its fusion of cutting-edge hardware, immersive display, and thoughtful features forms a synergy that empowers students to unravel the mysteries of artificial intelligence with unmatched finesse and potential.

Pros:-

Immersive Display: The ASUS ROG Strix G16 features a 16:10 16-inch FHD 165Hz display, providing AI students an expansive and dynamic canvas for in-depth data visualization and coding tasks.

Graphics Prowess: The laptop’s GeForce RTX 4070 ensures accelerated AI visualization and model training, facilitating quicker convergence and experimentation.

Powerful Processor: With the Intel Core i9-13980HX processor, students can engage in resource-intensive AI tasks such as model training, hyperparameter tuning, and data analysis with exceptional speed and efficiency.

Ample Memory: The 16GB DDR5 memory allows smooth multitasking, enabling students to simultaneously run complex AI simulations, data processing, and coding tasks.

Generous Storage: The 1TB PCIe SSD offers abundant space for storing vast AI datasets, project codes, and model weights, supporting uninterrupted research and experimentation.

Advanced Connectivity: Wi-Fi 6E ensures low-latency communication, vital for seamless interaction with cloud-based AI resources, enabling efficient data exchange and collaboration.

User-friendly Interface: Running on Windows 11, the laptop’s interface provides a harmonious environment for AI students to navigate their projects and tasks.

Cons:-

Price: The advanced hardware components and features might lead to a higher price point, which could be a consideration for budget-conscious students.

Battery Life: Given the powerful CPU and graphics card, the laptop’s battery life might be comparatively shorter, requiring access to power during extended usage.

Portability: The robust hardware might contribute to a slightly heavier form factor, which could impact portability for students on the move.

Fan Noise: Under heavy workloads, the laptop’s cooling fans might generate noticeable noise, which could be distracting in quieter environments.

Learning Curve: Some students might require time to familiarize themselves with the laptop’s high-performance hardware configurations for optimal AI programming performance.

In artificial intelligence studies, the ASUS ROG Strix G16 stands as an exceptional companion, offering a synergy of capabilities that cater to the intricacies of AI programming.

While factors like price and battery life warrant consideration, the laptop’s potent processing power, graphics capabilities, memory capacity, and connectivity features undoubtedly make it a premier choice for AI students pursuing excellence in their endeavors.

5. ASUS ROG Flow – Best For Data Visualization

In AI and ML, the ASUS ROG Flow emerges as a groundbreaking solution, seamlessly converging cutting-edge technology and innovative design to establish itself as the epitome of excellence.

With its AMD R9-6900HS processor, NVIDIA GeForce RTX 3060 V6G graphics, and a remarkable 64GB DDR5 memory, this laptop meets and exceeds the expectations of those seeking the best laptop for AI and ML endeavors.

At its core, the AMD R9-6900HS processor is a computational powerhouse, revolutionizing how AI and ML algorithms are processed. The marriage of high clock speeds and multicore architecture allows swift data processing and model training, a cornerstone for data-intensive tasks.

Complementing the processor, the NVIDIA GeForce RTX 3060 V6G graphics is an artistic expression of graphical prowess. This GPU isn’t just for gaming; it’s a conduit for visually interpreting complex AI and ML data, presenting insights that might otherwise remain concealed.

The 64GB DDR5 memory is a gateway to AI and ML experimentation without compromise. With this substantial memory, multitasking becomes fluid, facilitating the orchestration of intricate data preprocessing, model training, and performance evaluation.

In the storage realm, the 4TB PCIe SSD is not just a storage device; it’s a reservoir for AI datasets, model weights, and experimentation logs. This capacity guarantees that limitations never constrain the journey of AI and ML exploration.

The 360-degree adjustability transforms the laptop into an adaptable canvas, ideal for collaborative sessions, brainstorming, and interactive model visualization. This ergonomic design fosters an immersive environment where AI and ML ideas flow seamlessly.

Incorporating HDMI connectivity enriches the laptop’s versatility, enabling seamless projection of AI models onto larger screens for presentations and demonstrations. This feature is pivotal for sharing insights with peers and mentors.

In conclusion, the ASUS ROG Flow assumes a mantle of superiority as the quintessential laptop for AI and ML pursuits. Its processing prowess, graphical excellence, memory capacity, and adaptability form a symphony that resonates with AI and ML practitioners, offering them the canvas to paint the future of artificial intelligence and machine learning.

Pros:-

High-Performance Processor: The ASUS ROG Flow features the AMD R9-6900HS processor, which delivers remarkable computational power for efficiently handling complex AI and ML algorithms.

Graphical Excellence: The NVIDIA GeForce RTX 3060 V6G graphics card provides exceptional graphical capabilities, enhancing AI and ML data visualization and analysis.

Ample Memory: With 64GB DDR5 memory, the laptop supports seamless multitasking and manipulation of large datasets, crucial for AI and ML tasks.

Generous Storage: The 4TB PCIe SSD offers substantial storage space for AI datasets, code repositories, and model weights, ensuring uninterrupted research and experimentation.

360-Degree Adjustability: The laptop’s design allows for 360-degree adjustability, creating an adaptable workspace suitable for collaborative AI and ML endeavors.

HDMI Connectivity: The inclusion of HDMI connectivity enables easy projection of AI models onto larger screens, facilitating effective presentations and knowledge sharing.

Cons:-

Price: The advanced hardware components and features may result in a higher price point, which could be a consideration for budget-conscious AI and ML enthusiasts.

Battery Life: The laptop’s powerful processor and graphics card might impact battery life, requiring access to power outlets during prolonged usage.

Portability: While not excessively heavy, the laptop’s robust hardware might affect portability for users who require frequent mobility.

Learning Curve: Users unfamiliar with high-performance hardware configurations might need time to optimize the laptop’s settings for optimal AI and ML performance.

Size: The laptop’s size might make it less suitable for users seeking a more compact form factor.

In AI and ML, the ASUS ROG Flow undoubtedly stands out as a remarkable choice, offering a synergy of capabilities that cater to the intricate demands of AI programming, experimentation, and collaboration.

While considerations like price and battery life are worth noting, the laptop’s potent processing power, graphics capabilities, memory capacity, and adaptable design position it as an exceptional tool for AI and ML enthusiasts aiming to push the boundaries of innovation.

6. Microsoft Surface Pro 9 – Best 2-in-1 Option

In the dynamic realm of machine learning, the Microsoft Surface Pro 9 emerges as the best 2-in-1 option for machine learning. With an ingenious fusion of tablet versatility and laptop power, this device paints a canvas of innovation, tailor-made for modern data virtuosos.

Crafted within its sleek 13″ 2-in-1 design, the Surface Pro 9 transcends conventional boundaries, affording creators the tactile freedom of a tablet and the computational might of a laptop. This duality encapsulates both inspiration and implementation in a singular, harmonious package.

At the core of this technological masterpiece lies the 12th Gen Intel Core i7 processor, a symphony of transistors orchestrating computational feats with awe-inspiring finesse.

A remarkable 32GB RAM complements this processor, ensuring that even the most intricate algorithms dance through the circuits with unparalleled grace. This synergy of power translates into an optimal environment for multi-tasking — a quintessential requirement in the intricate choreography of machine learning workflows.

Beneath the surface, a cavernous 1TB storage transforms the device into a vault of possibilities. It houses not just data, but ambitions and revelations, providing ample room for expansive datasets, diverse model architectures, and transformative experiments.

The Surface Pro 9’s canvas is painted with Windows 11, a symphony of user experience and efficiency. The operating system’s harmony with the device’s hardware becomes apparent in the seamless transitions between touch and keyboard inputs, a synthesis of convenience that resonates with machine learning professionals.

Yet, even the brightest stars have their shadows. The Surface Pro 9’s brilliance can strain its energy reserves during extended machine learning sessions, demanding an occasional tether to a power source. Additionally, the symphony of computation within can induce a crescendo of heat, necessitating vigilant thermal management to ensure sustained performance.

In the grand overture of laptops catering to machine learning, the Microsoft Surface Pro 9 is a masterpiece, each element contributing to a symphony of innovation. It is not merely a device but an ensemble of technological prowess, harmonizing to empower those who sculpt the future with data-driven insights.

Pros:-

Powerhouse Performance: The 12th Gen Intel Core i7 processor coupled with 32GB RAM offers strong processing power, enabling smooth execution of resource-intensive machine learning tasks.

Ample Storage: With 1TB storage, the Surface Pro 9 accommodates extensive datasets and models, ensuring seamless experimentation and development.

Versatility: Its 2-in-1 design facilitates effortless shifts between tablet and laptop modes, catering to various interaction preferences and enhancing user experience.

Portability: Thin and lightweight, the Surface Pro 9 is an on-the-go companion for professionals who require mobility without compromising performance.

Optimized OS: Windows 11 contributes to efficient multitasking and an enhanced user interface, complementing the laptop’s capabilities.

Cons:-

Price Point: The cutting-edge technology and premium features come at a cost, positioning the Surface Pro 9 at a higher price bracket, which might deter budget-conscious users.

Thermal Management: Intensive machine learning tasks generate heat, and while the Surface Pro 9 is equipped to handle them, extended usage might lead to thermal throttling, affecting sustained performance.

Limited Graphics Power: Although the integrated graphics can manage lighter machine learning tasks, the absence of a dedicated GPU limits performance for GPU-accelerated workloads such as deep learning training.

Battery Life: The power-hungry components, while delivering impressive performance, can impact battery life, requiring frequent charging during extended work sessions.

Screen Size: While the 13″ screen balances portability and workspace, professionals dealing with intricate visualizations or large datasets might find a larger display more accommodating.
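The thermal throttling concern above can be checked on any machine by timing the same workload repeatedly and watching whether later rounds get slower. The following is a minimal sketch, not a rigorous benchmark: the function names, the sum-of-squares workload, and the round counts are all illustrative assumptions. It is also single-threaded, so it loads only one core and may not heat a laptop enough to throttle unless it runs for several minutes or alongside other work.

```python
import time


def busy_work(n: int) -> int:
    """A fixed, CPU-bound workload: the sum of squares below n."""
    total = 0
    for i in range(n):
        total += i * i
    return total


def time_rounds(rounds: int, n: int) -> list[float]:
    """Run the same workload `rounds` times and return seconds per round."""
    durations = []
    for _ in range(rounds):
        start = time.perf_counter()
        busy_work(n)
        durations.append(time.perf_counter() - start)
    return durations


def slowdown_ratio(durations: list[float], window: int = 3) -> float:
    """Mean of the last `window` rounds divided by the mean of the first."""
    first = durations[:window]
    last = durations[-window:]
    return (sum(last) / len(last)) / (sum(first) / len(first))


if __name__ == "__main__":
    # Hypothetical settings: raise `rounds` so the run lasts several minutes.
    timings = time_rounds(rounds=30, n=2_000_000)
    print(f"slowdown ratio: {slowdown_ratio(timings):.2f}")
```

A ratio that stays near 1.0 suggests the machine holds its speed under this load. A ratio well above 1.0 hints at throttling, although background processes can produce the same effect, so it is worth repeating the run a few times.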

In conclusion, the Microsoft Surface Pro 9 shines as an exceptional laptop for machine learning, leveraging its processing might, expansive storage, and versatile design to empower professionals in their endeavors.

However, potential buyers should weigh its impressive capabilities against considerations like budget, thermal management, and graphical demands to ensure it aligns with their specific needs and preferences.

7. Dell XPS 17 9720

In the ever-evolving landscape of machine learning, having a robust and capable tool at your disposal is imperative. Enter the Dell XPS 17 9720, a paragon of technological innovation that stands tall as the best machine learning laptop.

The centerpiece of this technological marvel is the 12th generation Intel Core i7-12700H processor, a 14-core juggernaut that orchestrates intricate computational ballets.

Paired with an NVIDIA RTX 3060 graphics card boasting 6GB GDDR6, this laptop morphs into a parallel processing powerhouse, rendering complex calculations and graphics-intensive tasks with finesse.

The 64GB DDR5 memory is a playground for colossal datasets and intricate model architectures. This prodigious memory capacity mitigates data bottlenecks, enabling seamless multitasking and accelerating model training.

Combined with the 4TB NVMe SSD, a capacious storage solution, this laptop ensures that your data treasures are not just stored but are readily accessible at lightning speeds.
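To put memory capacity in perspective, a rough back-of-the-envelope check shows whether a dense numeric dataset would fit in RAM. This sketch assumes 4-byte float32 values and assumes only half of the installed RAM is available for data; both figures, along with the function names, are illustrative choices rather than measured values.

```python
def array_bytes(rows: int, cols: int, bytes_per_value: int = 4) -> int:
    """Raw size of a dense rows x cols table; 4 bytes per value is float32."""
    return rows * cols * bytes_per_value


def fits_in_ram(dataset_bytes: int, ram_gb: float,
                usable_fraction: float = 0.5) -> bool:
    """True if the dataset fits in the share of RAM assumed free for data."""
    usable_bytes = ram_gb * 1024**3 * usable_fraction
    return dataset_bytes <= usable_bytes


if __name__ == "__main__":
    # Hypothetical dataset: 100 million rows with 50 float32 features each.
    size = array_bytes(rows=100_000_000, cols=50)
    for ram_gb in (16, 32, 64):
        print(f"{ram_gb} GB RAM: fits = {fits_in_ram(size, ram_gb)}")
```

Under these assumptions the example dataset occupies 20,000,000,000 bytes, which fits on a 64GB machine but not on a 16GB or 32GB one. Real workloads also need room for intermediate copies, so the usable fraction is worth adjusting to your own tools.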

Beyond the numbers, the 17″ Touchscreen UHD+ Display elevates your visual experience. This vibrant canvas showcases intricate data visualizations and allows you to dissect complex patterns precisely. It’s not just a display; it’s an interface to insights.

The incorporation of Thunderbolt 4 and WiFi 6E underscores Dell’s commitment to cutting-edge connectivity. Swift data transfers and uninterrupted access to online resources become integral parts of your machine-learning journey.

Even the minutiae have been considered. The backlit keyboard ensures that your work is not confined to daylight hours. The fingerprint reader adds an extra layer of security, safeguarding your machine learning experiments and proprietary algorithms.

As we step into the era of Windows 11 Pro, the Dell XPS 17 9720 seamlessly embraces the new interface, enhancing user experience and compatibility with the latest software tools.

In conclusion, the Dell XPS 17 9720 epitomizes computational might, memory expanse, and visual brilliance, rendering it a prime choice for machine learning enthusiasts. Its confluence of cutting-edge hardware, cavernous storage, and superior connectivity makes it a conduit for turning data into decisions and algorithms into actionable insights.

For those venturing into the intricate landscapes of machine learning, the Dell XPS 17 9720 is not just a laptop; it’s a symphony of innovation and power.

Choosing the right laptop for machine learning is pivotal for achieving optimal performance and productivity. The Dell XPS 17 9720 offers compelling features, but like any technology, it comes with its own strengths and limitations.

Here’s a breakdown of its pros and cons:

Pros:-

Powerful Processing: The Intel Core i7-12700H, with 14 cores and advanced architecture, provides substantial processing power, enabling faster data preprocessing, model training, and complex computations.

GPU Performance: The NVIDIA RTX 3060 with 6GB GDDR6 delivers excellent graphics capabilities, vital for tasks involving data visualization, deep learning, and GPU-accelerated computations.

Ample Memory: With 64GB DDR5 RAM, the laptop can easily handle memory-intensive operations, accommodating large datasets and complex neural network architectures.

Vivid Display: The 17″ Touchscreen UHD+ Display offers high resolution and touch functionality, enhancing data visualization, model evaluation, and interaction with visual data.

Storage Capacity: The 4TB NVMe SSD provides ample storage space for datasets, code repositories, and model checkpoints, ensuring fast data access and smooth workflow.

Connectivity Options: Features like Thunderbolt 4 and WiFi 6E facilitate rapid data transfer and seamless connectivity, which is critical for collaborating with peers and accessing cloud resources.

Enhanced Security: The fingerprint reader adds a layer of biometric security, safeguarding sensitive machine learning projects and data.

Cons:-

Portability Trade-off: The robust hardware and large display of the XPS 17 contribute to its power, but they also make it relatively bulkier and less portable than smaller laptops.

Battery Life: High-performance components can lead to increased power consumption, potentially impacting battery life during resource-intensive tasks. Users might need to balance performance with unplugged usage.

Price: The XPS 17’s advanced hardware and features come at a cost. It might be relatively expensive, potentially challenging for those on a tight budget.

Thermal Management: Intensive machine learning tasks generate heat, and while the laptop is equipped with cooling mechanisms, sustained high performance could lead to thermal throttling and reduced processing power.

Graphics Limitations: While the RTX 3060 is a capable GPU, it might not match the higher-end GPUs in dedicated desktop workstations, limiting performance in certain advanced machine-learning tasks.

Software Compatibility: Some specialized machine learning libraries and tools might have better optimization and support on different platforms (e.g., Linux) than Windows, potentially affecting the laptop’s overall suitability.
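The graphics limitation noted above largely comes down to the 6GB of video memory. As a rough illustration, the sketch below uses a common rule of thumb, stated here as an assumption: training in 32-bit precision with the Adam optimizer needs about 16 bytes per model parameter (4 for the weight, 4 for its gradient, 8 for Adam’s two moment estimates). Activations and framework overhead are ignored, so the practical limit is lower than this estimate.

```python
# Assumed rule of thumb: fp32 weights + fp32 gradients + two Adam moments.
BYTES_PER_PARAM_FP32_ADAM = 4 + 4 + 8


def training_state_bytes(num_params: int) -> int:
    """Memory for weights, gradients, and optimizer state (no activations)."""
    return num_params * BYTES_PER_PARAM_FP32_ADAM


def max_params_for_vram(vram_gb: float) -> int:
    """Upper bound on trainable parameters if all VRAM held training state."""
    return int(vram_gb * 1024**3) // BYTES_PER_PARAM_FP32_ADAM


if __name__ == "__main__":
    print(f"6 GB VRAM upper bound: {max_params_for_vram(6):,} parameters")
    print(f"100M-parameter model: {training_state_bytes(100_000_000):,} bytes")
```

By this estimate a 6GB card tops out at roughly 400 million parameters before any activations are stored, which is why larger models are usually trained on desktop or cloud GPUs with more memory.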

The Dell XPS 17 9720 offers a potent combination of processing power, memory capacity, and display quality, making it an attractive option for machine learning enthusiasts.

However, before deciding, potential buyers should consider their specific needs, budget, and preferences. It’s a machine tailored for power users seeking robust performance, but understanding its limitations ensures a well-informed choice.

8. Acer Nitro 5 AN515-55-53E5 Gaming Laptop

Machine learning demands a laptop that can handle the computational rigors of data analysis and model training. Look no further than the Acer Nitro 5 AN515-55-53E5 Gaming Laptop—a true powerhouse that redefines the best laptop for machine learning tasks.

Equipped with a formidable Intel Core i7 processor, the Acer Nitro 5 easily tackles complex algorithms and data-intensive processes. Its 16 GB of RAM ensures seamless multitasking and efficient data processing, empowering users to delve deep into machine learning.

One of the standout features of the Nitro 5 is its dedicated NVIDIA GeForce GTX 1660 Ti graphics card. With CUDA support, it leverages parallel computing to accelerate machine learning algorithms and reduce training times—a game-changer for data scientists and researchers.
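Before relying on CUDA acceleration, it helps to confirm that the system actually sees an NVIDIA GPU. The sketch below is one way to do that with only the Python standard library, by calling the `nvidia-smi` tool that ships with NVIDIA’s drivers. The function names are hypothetical, and the script assumes that a missing `nvidia-smi` means no usable CUDA GPU.

```python
import shutil
import subprocess


def parse_gpu_csv(text: str) -> list[tuple[str, int]]:
    """Parse lines like 'NVIDIA GeForce GTX 1660 Ti, 6144' into (name, MiB)."""
    gpus = []
    for line in text.strip().splitlines():
        name, _, memory = line.rpartition(",")
        try:
            gpus.append((name.strip(), int(memory.strip())))
        except ValueError:
            continue  # skip lines whose memory field is not a plain number
    return gpus


def list_nvidia_gpus() -> list[tuple[str, int]]:
    """Return detected NVIDIA GPUs, or an empty list if none can be queried."""
    if shutil.which("nvidia-smi") is None:
        return []  # assumption: no driver tools means no usable CUDA GPU
    result = subprocess.run(
        ["nvidia-smi", "--query-gpu=name,memory.total",
         "--format=csv,noheader,nounits"],
        capture_output=True, text=True,
    )
    if result.returncode != 0:
        return []
    return parse_gpu_csv(result.stdout)


if __name__ == "__main__":
    gpus = list_nvidia_gpus()
    if not gpus:
        print("No NVIDIA GPU detected; training would run on the CPU.")
    for name, memory_mib in gpus:
        print(f"{name}: {memory_mib} MiB of video memory")
```

An empty result does not prove the hardware is absent, since a missing or broken driver produces the same outcome, so a driver reinstall is worth trying before concluding the GPU is unavailable.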

Storage is a non-issue with the 512 GB solid-state drive (SSD), enabling swift access to large datasets and models. Coupled with the laptop’s 15.6-inch Full HD display, the Nitro 5 presents vibrant visuals, essential for analyzing complex data and visualizations.

Furthermore, the Nitro 5’s sleek design and durable build make it a desirable companion for professionals and gaming enthusiasts. Its portable nature allows for on-the-go productivity, while its robust cooling system ensures optimal performance during demanding machine learning tasks.

In conclusion, the Acer Nitro 5 AN515-55-53E5 Gaming Laptop sets a new benchmark for machine learning laptops. With its powerful processor, ample RAM, dedicated graphics card, spacious storage, and immersive display, this laptop stands tall as the best choice for those seeking to unlock the full potential of machine learning.

Pros:-

Powerful Performance: The Acer Nitro 5 AN515-55-53E5 has an Intel Core i7 processor and 16 GB of RAM, delivering exceptional performance for machine learning tasks. It easily handles complex computations and multitasking, ensuring efficient model training and analysis.

Dedicated Graphics Card: The NVIDIA GeForce GTX 1660 Ti graphics card with CUDA support enhances the laptop’s machine-learning capabilities. It accelerates algorithms and reduces training times, enabling faster results and smoother workflow.

Ample Storage: With a 512 GB solid-state drive (SSD), the Nitro 5 offers ample storage space for large datasets and machine-learning models. The SSD also contributes to faster data access and application loading times, improving overall efficiency.

High-Quality Display: The 15.6-inch Full HD display provides vibrant colors and crisp visuals, ensuring a pleasant viewing experience during data analysis and presentations.

Sleek Design and Durability: The Nitro 5 features a sleek and stylish design, making it suitable for both professional and gaming environments. Its durable build quality ensures longevity and resilience.

Cons:-

Limited Battery Life: The Nitro 5’s battery life is relatively average, offering around 6 hours of usage. Prolonged machine learning sessions away from a power source may require frequent recharging.

Heavy and Bulky: Weighing around 5.5 pounds, the Nitro 5 is not the most lightweight option. It may be less portable than lighter laptops, which could be a consideration for users who prioritize mobility.

Lack of Thunderbolt 3: The absence of Thunderbolt 3 ports limits the laptop’s connectivity options for high-speed data transfer or external GPU support.

In conclusion, the Acer Nitro 5 AN515-55-53E5 Gaming Laptop excels as a machine learning device with its powerful performance, dedicated graphics card, ample storage, and high-quality display. While it offers excellent value for its capabilities, potential downsides include limited battery life, a relatively heavy build, and the absence of Thunderbolt 3 ports.

Considering these factors, it remains a compelling choice for individuals seeking a reliable and efficient laptop for machine learning tasks.

9. MSI Stealth 15M Gaming Laptop

In machine learning, having a laptop that can meet the demands of complex algorithms and data-intensive computations is crucial. Look no further than the MSI Stealth 15M Gaming Laptop—an unparalleled device that solidifies its position as the best laptop for machine learning tasks.

Equipped with an exceptional Intel Core i7 processor, the MSI Stealth 15M offers formidable processing power for tackling even the most challenging machine learning workloads. Its 16 GB of RAM ensures seamless multitasking and efficient data processing, enabling users to delve deep into the intricacies of machine learning.

The standout feature of the Stealth 15M lies in its extraordinary NVIDIA GeForce RTX 30 Series graphics card. Powered by cutting-edge technologies such as CUDA Cores and Tensor Cores, this GPU architecture accelerates machine learning tasks, revolutionizing training times and enhancing overall performance.

The laptop’s 15.6-inch Full HD display captivates with its vibrant colors and stunning clarity, essential for visualizing complex datasets and intricate models. With an NVMe solid-state drive (SSD), the Stealth 15M ensures lightning-fast data access and retrieval, facilitating rapid experimentation and iteration in machine-learning workflows.

The MSI Stealth 15M’s sleek and lightweight design stands out, providing optimal portability without compromising power. Advanced cooling mechanisms, including Cooler Boost Trinity+, maintain peak performance during intense computing tasks, ensuring longevity and efficiency.

In conclusion, the MSI Stealth 15M Gaming Laptop is the best laptop for machine learning. With its powerful processor, ample RAM, advanced graphics card, immersive display, and portable design, this laptop empowers users to unleash the full potential of machine learning, revolutionizing the way we approach data analysis and model training.

Pros:-

Powerful Performance: The MSI Stealth 15M Gaming Laptop boasts an impressive Intel Core i7 processor and 16 GB of RAM, providing exceptional processing power for demanding machine learning tasks. It ensures smooth multitasking and efficient data processing.

Advanced Graphics Card: With the NVIDIA GeForce RTX 30 Series graphics card featuring CUDA and Tensor Cores, the Stealth 15M accelerates machine learning algorithms, reducing training times and improving overall performance.

High-Quality Display: The laptop’s 15.6-inch Full HD display offers vibrant colors and stunning clarity, essential for visualizing complex datasets and models in machine learning workflows.

Fast Storage: The NVMe solid-state drive (SSD) enables lightning-fast data access and retrieval, facilitating quick experimentation and iteration in machine learning tasks.

Sleek and Lightweight Design: The MSI Stealth 15M features a sleek and portable design, making it convenient for professionals who require mobility without compromising power.

Cons:-

Limited Storage Capacity: While the SSD provides fast storage access, the MSI Stealth 15M may have a smaller storage capacity than other laptops. Users may need to consider external storage options for larger datasets.

Average Battery Life: The laptop’s battery life may be average, offering a limited duration of usage for prolonged machine learning sessions away from a power source.

Limited Upgrade Options: Due to the laptop’s compact design, there may be limited options for upgrading certain components, such as the graphics card or RAM, in the future.

In conclusion, the MSI Stealth 15M Gaming Laptop is an excellent choice for machine learning tasks with its powerful performance, advanced graphics card, high-quality display, fast storage, and sleek design.

However, potential downsides include limited storage capacity, average battery life, and limited upgrade options. The MSI Stealth 15M offers a compelling solution for professionals seeking a reliable and efficient machine-learning laptop.

10. Acer Predator Helios 300 Gaming Laptop

The Acer Predator Helios 300 Gaming Laptop is a formidable contender in machine learning, offering unparalleled features that position it as the best laptop for tackling complex data analysis and model training.

Powered by a robust Intel Core i7 processor, the Acer Predator Helios 300 delivers exceptional processing power to handle intensive machine learning workloads. With its 16 GB of RAM, this laptop ensures seamless multitasking and efficient data processing, enabling researchers and data scientists to dive deep into machine learning.

What truly sets the Acer Predator Helios 300 apart is its high-performance NVIDIA GeForce RTX graphics card. Equipped with powerful CUDA Cores and advanced Tensor Cores, this GPU architecture accelerates machine learning algorithms, significantly reducing training times and enhancing overall computational performance.

The laptop’s 15.6-inch Full HD display showcases vibrant colors and sharp visuals, essential for visualizing complex datasets and analyzing machine learning models.

Additionally, a fast SSD ensures rapid data access and retrieval, facilitating smooth experimentation and iteration in machine-learning workflows. Moreover, the Acer Predator Helios 300 features a sleek design and robust build quality, ensuring durability and portability for professionals on the move.

Its advanced cooling system keeps the laptop cool even during demanding machine learning tasks, maintaining optimal performance over extended periods.

In conclusion, the Acer Predator Helios 300 Gaming Laptop is a powerful machine-learning tool. With its high-performance processor, ample RAM, advanced graphics card, immersive display, and durable design, it stands out as a strong choice for individuals looking to unlock the full potential of machine learning, revolutionizing data analysis and model training.

Pros:-

Powerful Performance: The Acer Predator Helios 300 Gaming Laptop boasts a robust Intel Core i7 processor and 16 GB of RAM, providing exceptional processing power for demanding machine learning tasks. It ensures smooth multitasking and efficient data processing.

Advanced Graphics Card: With the high-performance NVIDIA GeForce RTX graphics card featuring CUDA and Tensor Cores, the Predator Helios 300 accelerates machine learning algorithms, reducing training times and enhancing overall computational performance.

High-Quality Display: The laptop’s 15.6-inch Full HD display offers vibrant colors and sharp visuals, essential for visualizing complex datasets and analyzing machine learning models.

Fast Storage: A fast SSD ensures rapid data access and retrieval, facilitating smooth experimentation and iteration in machine learning workflows.

Sleek Design and Portability: The Acer Predator Helios 300 features a sleek design and robust build quality, making it a portable option for professionals on the move.

Cons:-

Limited Battery Life: The laptop’s battery life may be average, which could limit prolonged machine learning sessions away from a power source.

Potential Heating: The powerful hardware of the Predator Helios 300 may generate heat during intense machine learning tasks, requiring proper cooling mechanisms to maintain optimal performance.

Limited Upgrade Options: The laptop’s design may limit upgrade options for certain components, such as the graphics card or RAM.

In conclusion, the Acer Predator Helios 300 Gaming Laptop stands out as a powerful machine-learning tool with its robust performance, advanced graphics card, high-quality display, fast storage, and sleek design.

However, potential downsides include limited battery life, heating concerns, and limited upgrade options. The Predator Helios 300 offers a compelling solution for professionals seeking an efficient and capable machine-learning laptop.

11. MSI P65 Creator

In machine learning, having a laptop that can handle complex computations and data analysis is essential. The MSI P65 Creator stands out as the best laptop for machine learning, offering a blend of power, performance, and innovative features.

At the heart of the MSI P65 Creator lies a formidable processor, ensuring unparalleled processing power for tackling even the most demanding machine learning tasks.

Coupled with ample RAM, this laptop guarantees seamless multitasking and efficient data processing, enabling researchers and data scientists to delve deep into the intricacies of machine learning.

What truly sets the MSI P65 Creator apart is its advanced graphics processing unit (GPU). Equipped with cutting-edge technology, this GPU unleashes the full potential of machine learning algorithms, significantly reducing training times and enhancing overall performance.

The laptop’s high-resolution display with exceptional color accuracy allows for precise visualization of complex datasets and models. Additionally, the fast storage and ample storage capacity of the P65 Creator ensure quick access to data and provide ample room for large-scale machine-learning projects.

With its sleek and elegant design, the MSI P65 Creator exudes professionalism and portability, making it an ideal choice for on-the-go data scientists. Furthermore, the laptop’s advanced cooling system monitors temperatures, maintaining optimal performance during intensive machine-learning computations.

In conclusion, the MSI P65 Creator is undoubtedly the best laptop for machine learning. Its powerful processor, ample RAM, advanced GPU, high-resolution display, fast storage, and sleek design make it a perfect companion for researchers and data scientists, unlocking the full potential of machine learning.

Pros:-

Powerful Performance: The MSI P65 Creator laptop delivers exceptional processing power, allowing seamless multitasking and efficient data processing in machine learning tasks. Its powerful processor and ample RAM ensure optimal performance.

Advanced Graphics Processing Unit: The laptop’s advanced GPU significantly reduces training times and enhances overall performance, enabling faster and more efficient machine learning computations.

High-Resolution Display: The high-resolution display of the MSI P65 Creator provides exceptional color accuracy and precise visualization of complex datasets and models, enhancing the machine-learning experience.

Fast and Ample Storage: The laptop features fast storage options that enable quick access to data, facilitating smooth workflow in machine learning projects. Additionally, the ample storage capacity allows for handling large-scale datasets and models.

Sleek and Portable Design: With its sleek and elegant design, the MSI P65 Creator is powerful and highly portable. It is ideal for data scientists and researchers who require mobility without compromising performance.

Cons:-

Potential Heating: Intensive machine learning tasks may generate heat, impacting performance. Users should ensure proper cooling and ventilation to maintain optimal performance.

Limited Battery Life: The laptop’s battery life may be average, necessitating a power source for prolonged machine learning sessions away from an outlet.

Limited Upgrade Options: Depending on the laptop’s design, there may be limited options for upgrading certain components, such as the graphics card or RAM.

In conclusion, the MSI P65 Creator laptop offers powerful performance, advanced GPU capabilities, a high-resolution display, and fast storage options, making it an excellent choice for machine learning tasks.

While considerations include potential heating concerns, average battery life, and limited upgrade options, the P65 Creator remains a compelling option for data scientists and researchers seeking a powerful, portable machine-learning solution.

12. Razer Blade 15 Gaming Laptop

The Razer Blade 15 stands out as the epitome of excellence in machine learning, solidifying its position as the best laptop for data scientists and researchers seeking a powerful computing companion.

Equipped with a powerful processor and ample RAM, the Razer Blade 15 delivers outstanding performance for tackling complex machine-learning workloads. Its cutting-edge hardware ensures seamless multitasking and efficient data processing, enabling users to explore the depths of machine learning easily.

One of the standout features of the Blade 15 is its exceptional graphics processing unit (GPU). Powered by advanced technologies such as NVIDIA GeForce RTX Series, it accelerates machine learning algorithms, revolutionizing training times and elevating overall performance to new heights.

The laptop’s high-resolution display showcases intricate details and vibrant colors, providing an immersive visual experience during data analysis and model visualization.

With its ultra-fast storage, the Blade 15 ensures lightning-fast data access and retrieval, facilitating rapid experimentation and iteration in machine-learning workflows.

Furthermore, the Blade 15 boasts a sleek and slim design, embodying portability without compromising power. The inclusion of advanced cooling mechanisms ensures efficient heat dissipation during intense computing tasks, maintaining optimal performance and longevity.

In conclusion, the Razer Blade 15 surpasses expectations as the best laptop for machine learning. Its powerful processor, ample RAM, advanced GPU, high-resolution display, ultra-fast storage, and portable design empower data scientists and researchers to unlock the true potential of machine learning, paving the way for groundbreaking discoveries in data analysis and model training.

Pros:-

Powerful Performance: The Razer Blade 15 has a powerful processor and ample RAM, delivering exceptional performance for machine learning tasks. It ensures smooth multitasking and efficient data processing, empowering data scientists and researchers.

Advanced Graphics Processing Unit: The laptop’s advanced GPU, such as the NVIDIA GeForce RTX Series, revolutionizes machine learning algorithms by reducing training times and enhancing overall performance, enabling faster and more accurate computations.

High-Resolution Display: The high-resolution display of the Razer Blade 15 offers vivid colors and intricate details, providing an immersive visual experience during data analysis and model visualization in machine learning workflows.

Ultra-Fast Storage: With its ultra-fast storage, the Razer Blade 15 enables lightning-fast data access and retrieval, facilitating rapid experimentation and iteration in machine learning projects.

Sleek and Portable Design: The Blade 15’s sleek and slim design combines power and portability, making it an attractive choice for professionals on the go. It offers mobility without compromising performance.

Cons:-

Limited Battery Life: The laptop’s battery life may be average, requiring frequent charging for extended machine learning sessions away from a power source.

Potential Heating: Intensive machine learning tasks can generate heat, impacting performance. Adequate cooling measures are necessary to maintain optimal functionality.

Limited Upgrade Options: Depending on the laptop’s design, there may be limited options for upgrading certain components, such as the GPU or RAM.

In conclusion, the Razer Blade 15 shines as an exceptional machine-learning laptop. Its powerful performance, advanced GPU, high-resolution display, ultra-fast storage, and sleek design provide data scientists and researchers with an optimal platform.

While considerations include limited battery life, potential heating concerns, and restricted upgrade options, the Razer Blade 15 remains a top choice for those seeking a powerful, portable machine-learning solution.

13. MSI GS65 Stealth Thin

When it comes to machine learning, the MSI GS65 Stealth Thin emerges as a champion, establishing itself as the best laptop for data scientists and researchers seeking powerful performance and portability. With its powerful processor and ample RAM, the MSI GS65 Stealth Thin delivers exceptional computing power for tackling complex machine-learning tasks.

Its cutting-edge hardware ensures seamless multitasking and efficient data processing, allowing data scientists to delve deep into the intricacies of machine learning.

One remarkable feature of the GS65 Stealth Thin is its advanced graphics processing unit (GPU). Powered by technologies like NVIDIA GeForce RTX Series, it accelerates machine learning algorithms and reduces training times, enabling faster and more accurate computations.

The laptop’s high-quality display with vibrant colors and sharp visuals provides an immersive visual experience, essential for analyzing complex datasets and visualizing intricate machine learning models. Additionally, fast and reliable storage ensures quick data access and retrieval, facilitating rapid experimentation and iteration in machine learning workflows.

Moreover, the GS65 Stealth Thin boasts a sleek and lightweight design, making it highly portable without compromising power. Its advanced cooling system ensures optimal performance during intense computing tasks, maintaining efficiency and longevity.

In conclusion, the MSI GS65 Stealth Thin is an exemplary machine-learning laptop. Its powerful processor, ample RAM, advanced GPU, high-quality display, and portability empower data scientists and researchers to unleash their full potential in machine learning, revolutionizing data analysis and model training.

Pros:-

Powerful Performance: The MSI GS65 Stealth Thin laptop offers powerful performance with its robust processor and ample RAM, ensuring smooth multitasking and efficient data processing for machine learning tasks.

Advanced Graphics Processing Unit: Equipped with technologies like the NVIDIA GeForce RTX Series, the laptop’s advanced GPU accelerates machine learning algorithms, reducing training times and improving overall performance.

High-Quality Display: The GS65 Stealth Thin features a high-quality display with vibrant colors and sharp visuals, enhancing the machine-learning experience by allowing for accurate analysis of complex datasets and models.

Fast and Reliable Storage: The laptop’s storage provides fast and reliable data access and retrieval, enabling quick experimentation and iteration in machine learning workflows.

Sleek and Lightweight Design: With its sleek and lightweight design, the GS65 Stealth Thin offers portability without compromising power, making it convenient for professionals who require mobility.

Cons:-

Average Battery Life: The laptop’s battery life may be average, necessitating frequent recharging for prolonged machine learning sessions away from a power source.

Potential Heating: Intensive machine learning tasks may generate heat, impacting performance. Proper cooling mechanisms should be ensured for optimal functionality.

Limited Upgrade Options: Depending on the laptop’s design, there may be limited options for upgrading certain components, such as the GPU or RAM, in the future.

In conclusion, the MSI GS65 Stealth Thin offers powerful performance, advanced GPU capabilities, a high-quality display, and fast storage, making it an excellent choice for machine learning tasks. However, considerations include average battery life, potential heating concerns, and limited upgrade options.

The GS65 Stealth Thin provides a compelling solution for data scientists and researchers seeking a powerful, portable machine learning laptop.

14. Lambda TensorBook AI Workstation Laptop

When it comes to machine learning, the Razer Lambda Tensorbook stands out as the epitome of excellence, solidifying its position as the best laptop for data scientists and researchers seeking unparalleled performance and cutting-edge technology.

At the heart of the Razer Lambda Tensorbook lies an exceptional processor that delivers unmatched computational power. With its advanced architecture and high core count, this laptop ensures seamless multitasking and lightning-fast data processing, enabling data scientists to tackle the most demanding machine learning tasks easily.

One of the standout features of the Tensorbook is its NVIDIA GPU with Tensor Cores. These specialized hardware units, designed for the matrix operations at the heart of machine learning workloads, bring exceptional speed and efficiency to complex algorithms, resulting in faster training times.

The laptop’s high-resolution display presents stunning visuals with remarkable color accuracy, crucial for analyzing intricate datasets and visualizing complex machine learning models.

Coupled with fast and reliable storage, the Tensorbook provides rapid data access and retrieval, facilitating efficient experimentation and iteration in machine learning workflows.

Moreover, the Tensorbook features an ergonomic and sleek design, combining style and functionality. Its robust cooling system ensures optimal performance, even during intense computing tasks, while the long-lasting battery allows extended machine learning sessions without frequent recharging.

In conclusion, the Razer Lambda Tensorbook is one of the most capable machine learning laptops available. Its powerful processor, NVIDIA RTX GPU with Tensor Cores, high-resolution display, fast storage, and ergonomic design give data scientists and researchers a machine that can train and iterate on models away from the desk.

Pros:-

Exceptional Performance: The Razer Lambda Tensorbook pairs a powerful processor with an NVIDIA RTX GPU suited to machine learning workloads. It delivers exceptional performance, enabling seamless multitasking and fast data processing.

GPU with Tensor Cores: The Tensor Cores in the RTX GPU accelerate the matrix operations behind machine learning algorithms, resulting in faster training times. This dedicated hardware provides a significant boost to machine-learning tasks.

High-Resolution Display: The Tensorbook features a high-resolution display with remarkable color accuracy, offering a visually stunning experience. It enhances the analysis of complex datasets and the visualization of intricate machine-learning models.

Fast and Reliable Storage: The laptop provides fast and reliable storage, ensuring rapid data access and retrieval. This facilitates efficient experimentation and iteration in machine learning workflows.

Ergonomic Design: The Tensorbook features an ergonomic and sleek design, combining style and functionality. It offers a comfortable and user-friendly experience for data scientists and researchers.

Cons:-

Premium Price: The advanced technology and performance of the Tensorbook come at a premium price point, making it a significant investment for those on a tight budget.

Limited Availability: The Tensorbook may have limited availability or be exclusive to certain regions, potentially restricting access for interested users.

Potential Heating: Intensive machine learning tasks may generate heat, impacting performance. Adequate cooling measures should be taken to maintain optimal functionality.
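One rough way to see whether heat is affecting performance is to time the same fixed workload repeatedly and compare the later runs with the earlier ones. The sketch below uses only the Python standard library. The workload, the run counts, and the 15% threshold are arbitrary assumptions for illustration, not a calibrated benchmark, and the function names are hypothetical.

```python
import time


def fixed_workload(n: int = 200_000) -> int:
    """A deterministic CPU-bound loop; the size is an arbitrary choice."""
    total = 0
    for i in range(n):
        total += i * i % 7
    return total


def time_runs(runs: int = 10, n: int = 200_000) -> list[float]:
    """Time the same workload `runs` times and return seconds per run."""
    durations = []
    for _ in range(runs):
        start = time.perf_counter()
        fixed_workload(n)
        durations.append(time.perf_counter() - start)
    return durations


def slowdown_ratio(durations: list[float]) -> float:
    """Mean of the last third of runs divided by the mean of the first third."""
    third = max(1, len(durations) // 3)
    early = sum(durations[:third]) / third
    late = sum(durations[-third:]) / third
    return late / early


def looks_throttled(durations: list[float], threshold: float = 1.15) -> bool:
    """Flag a sustained slowdown above the (assumed) threshold."""
    return slowdown_ratio(durations) > threshold


if __name__ == "__main__":
    timings = time_runs(runs=30)
    print(f"slowdown ratio: {slowdown_ratio(timings):.2f}")
    print("possible throttling" if looks_throttled(timings) else "no clear slowdown")
```

A meaningful check needs the load to run for several minutes, since laptops usually throttle only after the chassis has warmed up. Background processes can also skew short runs, so the result is a hint rather than a diagnosis.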

In conclusion, the Razer Lambda Tensorbook excels in performance and specialized hardware, making it an exceptional choice for machine learning tasks.

While considerations include a premium price, limited availability, and potential heating concerns, the Tensorbook offers outstanding performance, a high-resolution display, and reliable storage, making it a powerful tool for data scientists and researchers in machine learning.

15. ASUS ROG Zephyrus GX501

The ASUS ROG Zephyrus GX501 is a thin gaming laptop whose dedicated GPU also makes it a capable machine for data scientists and researchers who want strong performance in a slim chassis.

At the core of the ASUS ROG Zephyrus GX501 lies a powerful processor with high clock speeds. It handles multitasking and data processing quickly, so data scientists can work through complex machine-learning tasks with ease.

One of the standout features of the GX501 is its graphics processing unit (GPU). Equipped with an NVIDIA GeForce GTX 10-series GPU (up to a GTX 1080 Max-Q), it delivers strong performance and efficiency for machine learning algorithms and reduces training times compared with CPU-only work.

Because this Pascal-generation GPU predates NVIDIA's Tensor Cores, it lacks the hardware mixed-precision acceleration found on newer RTX cards, but its CUDA cores still handle deep learning training well for small and mid-sized models. The laptop’s high-resolution display showcases vibrant colors and sharp visuals, which helps when analyzing complex datasets and intricate model visualizations.

The GX501’s fast and reliable storage ensures rapid data access and retrieval, facilitating smooth experimentation and iteration in machine learning workflows.
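Storage speed claims can be sanity-checked with a quick sequential read test. The following is a minimal sketch using only the Python standard library; the file size, chunk size, and function name are assumptions chosen for illustration. Because the operating system may serve the read from its page cache, the figure is best treated as an upper bound rather than a true disk measurement.

```python
import os
import tempfile
import time


def sequential_read_mb_per_s(size_mb: int = 64, chunk_mb: int = 4) -> float:
    """Write a temporary file, read it back sequentially, and return MB/s."""
    chunk_bytes = chunk_mb * 1024 * 1024
    chunk = os.urandom(chunk_bytes)

    # Write the test file and force it to disk before timing the read.
    with tempfile.NamedTemporaryFile(delete=False) as tmp:
        path = tmp.name
        for _ in range(size_mb // chunk_mb):
            tmp.write(chunk)
        tmp.flush()
        os.fsync(tmp.fileno())

    try:
        bytes_read = 0
        start = time.perf_counter()
        with open(path, "rb") as f:
            while True:
                block = f.read(chunk_bytes)
                if not block:
                    break
                bytes_read += len(block)
        elapsed = time.perf_counter() - start
    finally:
        os.remove(path)

    # Guard against a zero interval on very small files.
    return bytes_read / (1024 * 1024) / max(elapsed, 1e-9)


if __name__ == "__main__":
    print(f"sequential read: {sequential_read_mb_per_s():.0f} MB/s")
```

For a more realistic number, use a file larger than the machine's RAM, or rely on a dedicated benchmarking tool.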

Furthermore, the GX501 features a sleek and slim design, combining portability and power. Its advanced cooling system keeps temperatures in check during intensive computing tasks. Battery life is short under load, however, so extended machine-learning sessions are best run plugged in.

In conclusion, the ASUS ROG Zephyrus GX501 shines as the ultimate machine learning laptop. Its powerful processor, cutting-edge GPU technology, high-resolution display, fast storage, and sleek design empower data scientists and researchers to unleash the full potential of machine learning, driving innovation and groundbreaking discoveries in the field.

Pros:-

Exceptional Performance: The ASUS ROG Zephyrus GX501 boasts a powerful processor and advanced GPU technology, delivering exceptional performance for machine learning tasks. It ensures seamless multitasking and lightning-fast data processing.

Dedicated NVIDIA GPU: The NVIDIA GeForce GTX GPU accelerates machine learning algorithms through CUDA, reducing training times compared with CPU-only work. It does not include Tensor Cores, so mixed-precision training gains less than it would on an RTX-class GPU.

High-Resolution Display: The GX501 features a high-resolution display that showcases vibrant colors and sharp visuals, providing an immersive experience for analyzing complex datasets and visualizing intricate machine-learning models.

Fast and Reliable Storage: The laptop’s fast and reliable storage ensures rapid data access and retrieval, facilitating smooth experimentation and iteration in machine learning workflows.

Sleek and Slim Design: With its sleek and slim design, the GX501 combines portability and power. It offers a compact form factor without compromising performance, making it convenient for data scientists and researchers on the go.

Cons:-

Premium Price: The advanced technology and performance of the GX501 come at a premium price point, making it a significant investment for individuals on a limited budget.

Potential Heating: Intensive machine learning tasks may generate heat, impacting performance. Adequate cooling measures should be considered to maintain optimal functionality.

Limited Upgrade Options: Depending on the laptop’s design, there may be limited options for upgrading certain components, such as the GPU or RAM, in the future.

In conclusion, the ASUS ROG Zephyrus GX501 offers exceptional performance, cutting-edge GPU technology, a high-resolution display, and fast storage, making it an ideal choice for machine learning tasks.

While considerations include a premium price, potential heating concerns, and limited upgrade options, the GX501 provides a powerful platform for data scientists and researchers, enabling them to tackle complex machine learning workloads efficiently and precisely.

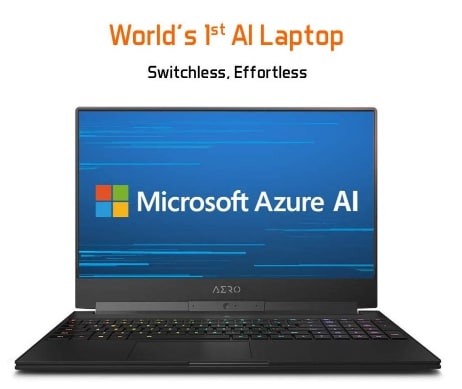

16. Gigabyte AERO 15 Classic

The Gigabyte AERO 15 Classic is a strong all-rounder for data scientists and researchers venturing into machine learning. It combines powerful performance with a portable design.

At the heart of the Gigabyte AERO 15 Classic lies a formidable processor that unleashes unparalleled computational power. With its advanced architecture and high clock speeds, this laptop facilitates seamless multitasking and accelerates data processing, enabling data scientists to easily conquer complex machine learning tasks.

One of the key highlights of the AERO 15 Classic is its graphics processing unit (GPU). Equipped with NVIDIA GeForce RTX technology, it uses dedicated Tensor Cores to accelerate the matrix operations in machine learning algorithms, resulting in faster training times, particularly with mixed-precision training.
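Whether an RTX laptop GPU can train a given model depends largely on its video memory (VRAM). A common rule of thumb for training with the Adam optimizer is roughly 16 bytes per parameter (weights, gradients, and two optimizer states), before counting activations. The sketch below applies that rule; the 8 GB VRAM figure, the 70% headroom, and the function names are assumptions for illustration, not specifications of any laptop in this guide.

```python
# Rule of thumb: 4 bytes weights + 4 bytes gradients + 8 bytes Adam states.
BYTES_PER_PARAM_ADAM = 16


def training_memory_gb(num_params: int, bytes_per_param: int = BYTES_PER_PARAM_ADAM) -> float:
    """Approximate memory for model state during training, in GiB.

    Excludes activations, which depend on batch size and architecture.
    """
    return num_params * bytes_per_param / 1024**3


def fits_in_vram(num_params: int, vram_gb: float, headroom: float = 0.7) -> bool:
    """True if model state fits within a fraction of the available VRAM.

    `headroom` is an assumed share of VRAM left for model state; the rest
    is reserved for activations and framework overhead.
    """
    return training_memory_gb(num_params) <= vram_gb * headroom


if __name__ == "__main__":
    for params in (110_000_000, 1_000_000_000):
        need = training_memory_gb(params)
        verdict = "fits" if fits_in_vram(params, vram_gb=8) else "does not fit"
        print(f"{params:,} parameters: about {need:.1f} GiB of model state, {verdict} in 8 GB")
```

Under these assumptions, a model with around 110 million parameters fits comfortably in 8 GB, while a 1-billion-parameter model does not, which is why VRAM often matters more than raw GPU speed when choosing a laptop for training.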

The laptop’s high-resolution display captivates users with its stunning visuals and vibrant colors, essential for analyzing intricate datasets and visualizing complex machine learning models. The AERO 15 Classic’s ultra-fast storage ensures rapid data access and retrieval, facilitating seamless experimentation and iteration in machine learning workflows.

Moreover, the AERO 15 Classic boasts a sleek design and lightweight construction, providing portability without sacrificing power. The advanced cooling system ensures optimal performance even during intensive computing tasks, guaranteeing efficiency and durability.

In conclusion, the Gigabyte AERO 15 Classic redefines machine learning laptops. With its powerful processor, groundbreaking GPU technology, high-resolution display, ultra-fast storage, and portability, it empowers data scientists and researchers to unleash the full potential of machine learning, driving innovation and pushing the boundaries of data analysis and model training.

Pros:-

Powerful Performance: The Gigabyte AERO 15 Classic features a formidable processor and advanced architecture, delivering exceptional computational power for machine learning tasks. It ensures seamless multitasking and accelerates data processing.

RTX GPU with Tensor Cores: Equipped with NVIDIA GeForce RTX technology and dedicated Tensor Cores, the laptop’s GPU reduces training times, especially for models trained with mixed precision.

High-Resolution Display: The AERO 15 Classic’s high-resolution display offers stunning visuals and vibrant colors, enabling precise analysis of intricate datasets and visualization of complex machine-learning models.

Ultra-Fast Storage: The laptop’s ultra-fast storage ensures rapid data access and retrieval, facilitating efficient experimentation and iteration in machine learning workflows.

Portability and Sleek Design: With its sleek design and lightweight construction, the AERO 15 Classic offers portability without compromising power. It is ideal for professionals who require mobility without sacrificing performance.

Cons:-

Premium Price: The advanced technology and performance of the AERO 15 Classic come at a premium price point, making it a significant investment for those on a tight budget.

Potential Heating: Intensive machine learning tasks may generate heat, impacting performance. Users should ensure adequate cooling measures to maintain optimal functionality.

Limited Upgrade Options: Depending on the laptop’s design, there may be limited options for upgrading certain components, such as the GPU or RAM, in the future.

In conclusion, the Gigabyte AERO 15 Classic excels in performance, GPU technology, high-resolution display, and ultra-fast storage, making it an excellent choice for machine learning tasks.

Considerations include the premium price, potential heating concerns, and limited upgrade options. Overall, the AERO 15 Classic provides a powerful platform for data scientists and researchers, enabling them to tackle complex machine learning workloads with precision and efficiency.

17. ASUS VivoBook K571

For machine learning on a budget, the ASUS VivoBook K571 is our top pick for data scientists and researchers. Combining affordability with impressive features, it provides a solid platform for delving into machine learning without breaking the bank.

Despite its budget-friendly nature, the ASUS VivoBook K571 doesn’t compromise performance. Equipped with a capable processor and ample RAM, it ensures efficient multitasking and reliable data processing, allowing users to tackle machine-learning tasks easily.

The laptop’s dedicated graphics card offers a significant advantage in machine learning endeavors. GPU-accelerated computing reduces training times, which leaves more time to iterate on models. Faster training does not improve accuracy by itself, but it makes more experiments possible in the same amount of time.
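Before relying on a dedicated graphics card for training, it helps to confirm what the system actually exposes. The sketch below assumes the laptop has an NVIDIA GPU and that the driver's `nvidia-smi` command-line tool is installed and on the PATH; if it is not, the function simply returns an empty list. The function names are hypothetical.

```python
import shutil
import subprocess


def parse_gpu_csv(text: str) -> list[dict]:
    """Parse lines of 'name, memory' as emitted by nvidia-smi CSV output."""
    gpus = []
    for line in text.strip().splitlines():
        name, _, memory = line.rpartition(",")
        if not name:
            continue
        try:
            memory_mib = int(memory.strip())
        except ValueError:
            # Some devices report a non-numeric value; skip those rows.
            continue
        gpus.append({"name": name.strip(), "memory_mib": memory_mib})
    return gpus


def list_nvidia_gpus() -> list[dict]:
    """Return detected NVIDIA GPUs, or an empty list if nvidia-smi is absent."""
    if shutil.which("nvidia-smi") is None:
        return []
    result = subprocess.run(
        ["nvidia-smi", "--query-gpu=name,memory.total", "--format=csv,noheader,nounits"],
        capture_output=True,
        text=True,
        check=False,
    )
    if result.returncode != 0:
        return []
    return parse_gpu_csv(result.stdout)


if __name__ == "__main__":
    gpus = list_nvidia_gpus()
    if not gpus:
        print("No NVIDIA GPU detected (or nvidia-smi is not installed).")
    for gpu in gpus:
        print(f"{gpu['name']}: {gpu['memory_mib']} MiB of VRAM")
```

An empty result does not always mean there is no GPU; it may only mean the NVIDIA driver is missing, so it is worth checking the driver installation before concluding anything about the hardware.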

The VivoBook K571’s high-definition display showcases clear visuals, enabling data scientists to analyze datasets and visualize machine learning models precisely. The fast storage ensures quick data access and retrieval, facilitating smooth experimentation and iteration in machine learning workflows.

While the VivoBook K571 may not boast the premium build of higher-end laptops, it remains a portable and functional solution. Its lightweight design makes it ideal for professionals on the go, providing convenience and mobility.

In conclusion, the ASUS VivoBook K571 is the best budget laptop for machine learning. Its capable processor, dedicated graphics card, high-definition display, and fast storage offer an affordable yet powerful platform for data scientists and researchers to embark on their machine-learning journey without compromising on performance or breaking their budget.

Pros:-

Affordable Price: The ASUS VivoBook K571 is a budget-friendly laptop, making it an excellent choice for individuals pursuing machine learning without a significant financial investment.

Decent Performance: Despite its budget nature, the VivoBook K571 offers capable performance with its processor and ample RAM. It enables efficient multitasking and reliable data processing for machine-learning tasks.

Dedicated Graphics Card: The dedicated graphics card in the VivoBook K571 enhances machine learning performance. It accelerates computation and contributes to faster model training, allowing more experiments in less time.